A new paper from researchers details “proxies” as a key interface concept for mixed reality headsets.

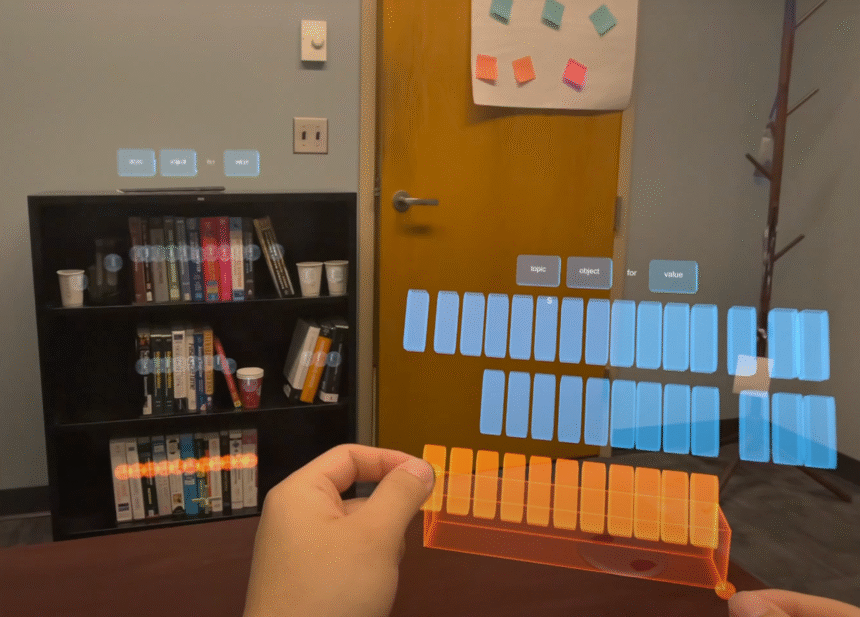

The idea, from researchers affiliated with Google and the University of Minnesota, uses proxies to bridge the gap between the space within arm’s reach and faraway objects. The concept, explored through their system Reality Proxy, could see a headset’s cameras used in tandem with AI, existing mapping data, and user input to create near-instantaneous dollhouse-scale representations of areas of interest in the physical environment. At the same time, the physical objects represented by the near-field proxy could be outlined in the background to show what the user is selecting.

“If AI is going to enable humans in their everyday tasks, it most likely will be via XR,” wrote researcher Dr. Mar Gonzalez-Franco on Bluesky. “The problem then is that if a selection has real-world consequences, we will need great precision to interact.”

The concept could make it trivial to grab a digital copy of a physical book from your bookshelf, for instance, saving you a trip from the couch. If that’s too pedestrian a use of headsets for you, the same idea could be extended to managing drone swarms, selecting them in space by dragging a cube over them as if they were units in a Command & Conquer game. You could also see your entire path through a building outlined in miniature before you step inside.

The paper’s authors say the aim of their system is “to facilitate the interaction with real objects beyond reach in MR while preserving the natural mental model of direct manipulation. We propose to seamlessly shift the interaction target from the object to its abstract representation, or proxy, during selection.”

The idea could enable “users to interact more effectively with objects that are crowded, distant, or partially occluded. Augmented by AI, Reality Proxy further supports advanced MR interactions, such as multiselection, semantic grouping, and spatial zooming, using intuitive direct manipulation gestures.”

The paper is co-authored by Xiaoan Liu, Difan Jia, Xianhao Carton Liu, Mar Gonzalez-Franco, and Chen Zhu-Tian. It is available online and was submitted as part of the ACM UIST Conference, hosted in Korea at the end of September.